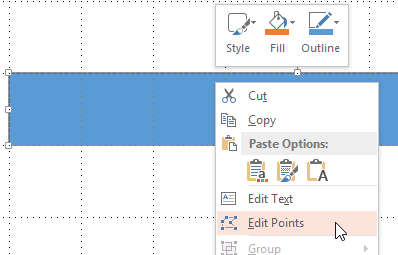

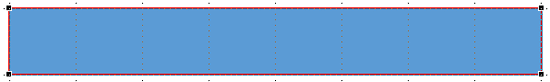

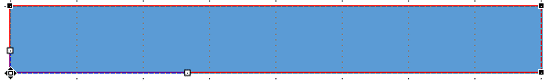

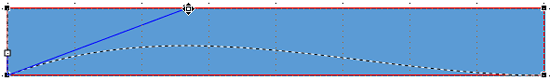

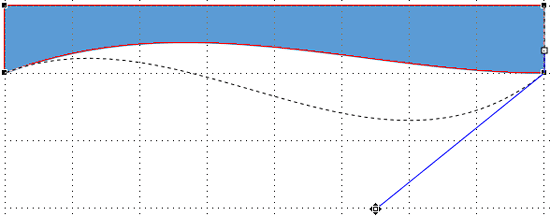

Learn how to create curved shapes in PowerPoint 2013 for Windows. You can create a curved shape by dragging just one or two points.

Author: Geetesh Bajaj

Product/Version: PowerPoint 2013 for Windows

OS: Microsoft Windows 7 and higher

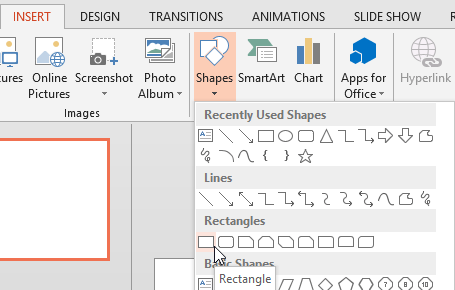

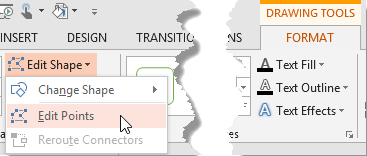

PowerPoint provides an extensive array of built-in shapes that help you create great-looking graphics for your slides. You can manipulate these shapes by dragging their yellow squares or by combining them. At times, though, you may not achieve the exact appearance you want. For instance, you might want a slight curve in your shape's edges rather than conventional straight lines. PowerPoint lets you tweak a shape's edges into curves so that it looks more organic than geometric.

See Also:

Drawing Common Shapes: Creating Curved Shapes in PowerPoint (Index Page)

Creating Curved Shapes in PowerPoint 2011 for Mac

Microsoft and the Office logo are trademarks or registered trademarks of Microsoft Corporation in the United States and/or other countries.